As we ramp up to our first competition at Granite State next weekend, we’ve been making lots of refinements in both software and hardware (plus many hours of driver practice). I’ll focus mostly on software features; others can add anything I’ve missed.

Many thanks to our friends at Teams 1100 and 2168 for allowing us to visit and practice on their fields (and for helping with a few repairs)! It was also fantastic to chat with Teams 78 and 7407 on Saturday, and we wish you all the best of luck in the coming weeks.

The Name!

After an internal voting process, we have selected the name of our 2022 robot:

We’re pleased to welcome the latest addition to our Bot-Bot family, along with Bot-Bot Strikes Back (2020/2021), Bot-Bot Begins (2019), The Revenge of Bot-Bot (2018), and of course the original Bot-Bot (2017).

Auto-Drive and Auto-Align

We’ve focused our efforts on two key shots: scoring from directly against the fender and from the line at the back of the tarmac. To help the driver align quickly for those shots, we put together two assistance features.

To align while at the back of the tarmac, we use a fairly traditional “auto-aim” that takes over controlling the robot’s angular movement and points towards the hub. As with our auto-aim last year, this is based on odometry data that’s updated when the Limelight sees the target (see my previous posts for more details). One key improvement we made over our previous auto-aim is that the driver can continue to control the linear movement, allowing them to begin aligning as they approach the line. This means that aligning doesn’t have to be a separate action; once they reach the right distance, they can begin shooting immediately.
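A minimal sketch of the auto-aim idea, assuming a field-relative odometry pose (x, y, heading in radians, counterclockwise positive) and a known hub position in the same frame. The class name, gain, speed limit, and hub coordinates are hypothetical rather than taken from AutoAim.java; the point is only that the driver’s linear input passes straight through while a proportional controller on the wrapped heading error supplies the rotation.

```java
/**
 * Hypothetical auto-aim sketch: the driver keeps linear control, the software controls rotation.
 * Names, gains, and hub coordinates are illustrative assumptions, not taken from AutoAim.java.
 */
final class AutoAimSketch {
  /** Linear speed (m/s) from the driver and angular speed (rad/s, CCW positive) from the aim loop. */
  record DriveCommand(double linear, double omega) {}

  private final double hubX; // hub center in field coordinates, meters (assumed)
  private final double hubY;
  private final double kP; // rad/s of rotation per radian of heading error (assumed gain)
  private final double maxOmega; // rotation speed limit, rad/s

  AutoAimSketch(double hubX, double hubY, double kP, double maxOmega) {
    this.hubX = hubX;
    this.hubY = hubY;
    this.kP = kP;
    this.maxOmega = maxOmega;
  }

  /** Wraps an angle into [-pi, pi] so the robot always turns the short way. */
  static double wrap(double radians) {
    return Math.atan2(Math.sin(radians), Math.cos(radians));
  }

  /** Combines the driver's linear input with an automatic rotation toward the hub. */
  DriveCommand calculate(double robotX, double robotY, double robotHeading, double driverLinear) {
    // Odometry pose (corrected by vision when the target is visible) gives the bearing to the hub.
    double targetHeading = Math.atan2(hubY - robotY, hubX - robotX);
    double error = wrap(targetHeading - robotHeading);
    double omega = Math.max(-maxOmega, Math.min(maxOmega, kP * error));
    return new DriveCommand(driverLinear, omega);
  }

  public static void main(String[] args) {
    AutoAimSketch aim = new AutoAimSketch(8.23, 4.115, 4.0, 3.0);
    // Robot directly below the hub, facing +x: it should turn CCW at the speed limit
    // while still driving at whatever linear speed the driver requests.
    System.out.println(aim.calculate(8.23, 1.0, 0.0, 1.5));
  }
}
```

Wrapping the error matters because a heading of 3.0 rad and a target of -3.0 rad are only about 0.28 rad apart; without it the robot would spin the long way around.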

The fender shot is trickier, since just pointing at the target isn’t the key issue. We wanted to reduce how much the driver needs to maneuver once they reach the right distance. To solve this, we use an “auto-drive” system that guides the driver along a smooth path toward the nearest fender. The driver remains in control of linear movement while the software handles turning. The robot points toward a point projected out from the fender at 75% of the robot’s distance from the fender (shown as a transparent robot in the video). We’ve found this system especially helpful on the opposite side of the field, where visibility is low.
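A sketch of the lead-point geometry, reading “75% the distance of the robot” as 75% of the robot’s current distance from the fender. Each fender face is modeled as a point plus an outward-facing angle; those poses, the class name, and the helper names are hypothetical and not taken from DriveToTarget.java. Because the lead point slides toward the fender as the robot closes in, steering at it should trace a smooth curve that finishes square to the fender.

```java
import java.util.List;

/**
 * Hypothetical sketch of the fender "auto-drive" steering target. Fender poses and
 * names are illustrative assumptions, not taken from DriveToTarget.java.
 */
final class DriveToTargetSketch {
  /** A fender face: a point on the fender plus the direction pointing out into the field. */
  record Fender(double x, double y, double outwardAngle) {}

  record Point(double x, double y) {}

  /** The lead point sits out from the fender at this fraction of the robot's distance. */
  static final double LEAD_FRACTION = 0.75;

  /** Picks the fender face closest to the robot. */
  static Fender nearest(List<Fender> fenders, double robotX, double robotY) {
    if (fenders.isEmpty()) {
      throw new IllegalArgumentException("no fenders defined");
    }
    Fender best = fenders.get(0);
    for (Fender fender : fenders) {
      if (Math.hypot(fender.x() - robotX, fender.y() - robotY)
          < Math.hypot(best.x() - robotX, best.y() - robotY)) {
        best = fender;
      }
    }
    return best;
  }

  /** Lead point projected out from the fender; it slides in as the robot closes the distance. */
  static Point leadPoint(Fender fender, double robotX, double robotY) {
    double offset = LEAD_FRACTION * Math.hypot(fender.x() - robotX, fender.y() - robotY);
    return new Point(
        fender.x() + offset * Math.cos(fender.outwardAngle()),
        fender.y() + offset * Math.sin(fender.outwardAngle()));
  }

  /** Field-relative heading that points the robot at the lead point. */
  static double targetHeading(Point lead, double robotX, double robotY) {
    return Math.atan2(lead.y() - robotY, lead.x() - robotX);
  }

  public static void main(String[] args) {
    List<Fender> fenders = List.of(new Fender(0.0, 0.0, 0.0), new Fender(10.0, 0.0, Math.PI));
    Fender fender = nearest(fenders, 4.0, 3.0);
    Point lead = leadPoint(fender, 4.0, 3.0);
    System.out.printf(
        "lead=(%.2f, %.2f) heading=%.3f rad%n", lead.x(), lead.y(), targetHeading(lead, 4.0, 3.0));
  }
}
```

The heading from `targetHeading` would feed a turning controller like the auto-aim one, with the driver still supplying the linear speed.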

- AutoAim.java: Traditional auto-aim for the tarmac line shot.
- DriveToTarget.java: Auto-drive for the fender shot.

Vision & Driver Cameras

Our vision system was originally built to use PhotonVision, since it can report the corners of multiple targets to the robot code. All of this extra data feeds into our circle fitting algorithm, which produces odometry data. When it came time to connect our two driver cameras as well, PhotonVision was appealing as it supports multiple simultaneous streams. All three cameras were originally set up to run through our Limelight with PhotonVision.
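The post doesn’t spell out the circle fitting algorithm, so the following is only one simple way it could work: given the known radius of the ring the targets sit on, search for the center that minimizes the squared difference between each corner’s distance from the center and that radius. The pattern search, the names, and the synthetic points are assumptions for illustration, and the corners are treated as already projected into 2D field positions rather than raw pixels.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical circle fit for a ring of known radius, using a simple pattern search.
 * One possible approach for illustration, not necessarily the team's algorithm.
 */
final class CircleFitSketch {
  record Point(double x, double y) {}

  /** Sum of squared differences between each point's distance to the center and the radius. */
  static double cost(Point center, double radius, List<Point> points) {
    double total = 0.0;
    for (Point p : points) {
      double residual = Math.hypot(p.x() - center.x(), p.y() - center.y()) - radius;
      total += residual * residual;
    }
    return total;
  }

  /**
   * Moves the center guess in +/-x and +/-y steps while the cost improves, halving the step
   * whenever no move helps, until the step drops below the requested precision.
   */
  static Point fit(double radius, List<Point> points, Point initialGuess, double precision) {
    Point center = initialGuess;
    double bestCost = cost(center, radius, points);
    double step = radius;
    while (step > precision) {
      boolean improved = false;
      double[][] moves = {{step, 0.0}, {-step, 0.0}, {0.0, step}, {0.0, -step}};
      for (double[] move : moves) {
        Point candidate = new Point(center.x() + move[0], center.y() + move[1]);
        double candidateCost = cost(candidate, radius, points);
        if (candidateCost < bestCost) {
          center = candidate;
          bestCost = candidateCost;
          improved = true;
          break;
        }
      }
      if (!improved) {
        step /= 2.0;
      }
    }
    return center;
  }

  /** Average of the points, used here as a starting guess. */
  static Point centroid(List<Point> points) {
    double sumX = 0.0;
    double sumY = 0.0;
    for (Point p : points) {
      sumX += p.x();
      sumY += p.y();
    }
    return new Point(sumX / points.size(), sumY / points.size());
  }

  public static void main(String[] args) {
    // Synthetic corners on the near half of a ring centered at (2, 3) with radius 0.7.
    List<Point> points = new ArrayList<>();
    for (int deg = -90; deg <= 90; deg += 30) {
      double rad = Math.toRadians(deg);
      points.add(new Point(2.0 + 0.7 * Math.cos(rad), 3.0 + 0.7 * Math.sin(rad)));
    }
    Point center = fit(0.7, points, centroid(points), 1e-6);
    System.out.printf("center=(%.4f, %.4f)%n", center.x(), center.y());
  }
}
```

Since the camera only sees the near side of the ring, a starting guess placed behind the visible arc (away from the camera) can help the search avoid settling on a poorer solution; the centroid is adequate here only because the synthetic arc spans a full half circle.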

Unfortunately, our results with this system were less than satisfactory. Here are a few of the problems we encountered:

- PhotonVision appears to be very picky about what cameras it will talk to successfully. It took several iterations of trying different driver cameras to find ones that (almost) worked reliably. Many simply wouldn’t connect properly (e.g. showing up as multiple devices where none worked correctly), or caused PhotonVision to freeze up. Even after lots of experimentation, one of our driver cameras never ran above 3 FPS.

- Also, the Limelight hardware doesn’t seem to be capable of running three streams simultaneously at full speed (where one involves vision processing to find the target). All of the streams ran at fairly low framerates. This problem seems predictable in hindsight, but definitely prevents us from using one device for everything.

- Even with no driver cameras connected, we’ve encountered repeated issues with PhotonVision. The stream often starts at the wrong resolution, covering the cameras briefly can sometimes cause target tracking to fail until we flip into Driver Mode, and most significantly it often fails to connect to the RIO at all despite extensive experimentation with network settings.

While many of these issues could be worked around with enough effort, the Limelight 2022.2.2 update now provides the corner data our algorithm requires. We’ve been very satisfied with the reliability of the stock Limelight software over the past couple of years, so we’ve switched back for the time being. Supporting this in robot code was trivial since all of the interaction with PhotonVision is abstracted to an IO layer. We just wrote a Limelight implementation and were tracking again without touching the rest of the code!
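A minimal sketch of the IO-layer pattern, with hypothetical names: the vision code depends only on a small interface that fills in a plain inputs object, so a Limelight backend can replace a PhotonVision one without the consumer changing. Real implementations would read from NetworkTables; here a `Supplier` stands in for that read, and the flat `{x0, y0, x1, y1, ...}` corner layout is an assumption.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

/** Hypothetical IO interface: everything the vision code needs, with no vendor-specific types. */
interface VisionIO {
  /** Inputs refreshed every loop by whichever implementation is plugged in. */
  final class VisionIOInputs {
    double captureTimestamp = 0.0; // seconds
    final List<double[]> corners = new ArrayList<>(); // {x, y} coordinates of target corners
  }

  void updateInputs(VisionIOInputs inputs);
}

/** Hypothetical Limelight-backed implementation; the Suppliers stand in for NetworkTables reads. */
final class VisionIOLimelight implements VisionIO {
  private final Supplier<double[]> cornerArray; // assumed flat {x0, y0, x1, y1, ...}
  private final Supplier<Double> timestamp;

  VisionIOLimelight(Supplier<double[]> cornerArray, Supplier<Double> timestamp) {
    this.cornerArray = cornerArray;
    this.timestamp = timestamp;
  }

  @Override
  public void updateInputs(VisionIOInputs inputs) {
    double[] flat = cornerArray.get();
    inputs.captureTimestamp = timestamp.get();
    inputs.corners.clear();
    // Pair up the flat array; a trailing unpaired value is ignored.
    for (int i = 0; i + 1 < flat.length; i += 2) {
      inputs.corners.add(new double[] {flat[i], flat[i + 1]});
    }
  }
}

/** Consumer that only ever talks to VisionIO, so swapping backends doesn't touch it. */
final class VisionSketch {
  private final VisionIO io;
  private final VisionIO.VisionIOInputs inputs = new VisionIO.VisionIOInputs();

  VisionSketch(VisionIO io) {
    this.io = io;
  }

  /** Called every loop; the corner list would feed the circle fit from here. */
  int periodic() {
    io.updateInputs(inputs);
    return inputs.corners.size();
  }

  public static void main(String[] args) {
    VisionSketch vision = new VisionSketch(
        new VisionIOLimelight(() -> new double[] {10, 20, 30, 40, 50, 60}, () -> 1.25));
    System.out.println("corners seen: " + vision.periodic());
  }
}
```

Because the consumer never sees vendor types, a fake backend for tests or simulation is just a lambda.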

For the driver cameras, we mounted a separate Raspberry Pi running WPILibPi. This has been working flawlessly with two camera streams, so we’re feeling much better about our vision setup overall.

LEDs!

We mounted several strips of LEDs controlled by a REV Blinkin. It’s driven over PWM to change patterns and indicate robot state (e.g. intaking, two cargo are held, aligned to target, etc.). Here are the classes we’ve written to control it:

- BlinkinLedDriver.java: A simple wrapper for a Blinkin that includes an enum for all of the available patterns, since it appears a similar class isn’t included in REVLib. This makes pattern definitions much cleaner in the rest of the code (e.g. `blinkin.setMode(BlinkinLedMode.SOLID_GREEN)` instead of `spark.setSpeed(0.77)`).

- LedSelector.java: Our class for selecting the current LED state. We didn’t want to set up the LEDs as a subsystem required by commands, since this affects which commands are allowed to run simultaneously. Instead, each command/subsystem writes its own state to this object, which has a prioritized list of which patterns to use (including which to skip during auto). It also supports “test mode,” where a list of all the supported patterns is displayed on the dashboard. We had quite a fun time looking through all of the options to pick the pattern for each state.
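A sketch of the prioritized selection idea, not the actual LedSelector.java: each command or subsystem sets its own flag, and a single method walks the states in priority order, skipping those marked as hidden during auto. The state names, colors, and priorities are hypothetical. The PWM values are taken from the REV Blinkin pattern table (0.77 for solid green matches the example above), but they are worth double-checking against the Blinkin manual.

```java
import java.util.EnumMap;
import java.util.Map;

/**
 * Hypothetical sketch of a prioritized LED selector (not the team's LedSelector.java).
 * Commands and subsystems report states; one place decides which Blinkin pattern to show.
 */
final class LedSelectorSketch {
  /** Blinkin patterns as PWM values from the REV Blinkin pattern table (verify before use). */
  enum BlinkinLedMode {
    SOLID_RED(0.61),
    SOLID_GREEN(0.77),
    SOLID_BLUE(0.87),
    SOLID_WHITE(0.93),
    SOLID_BLACK(0.99); // black = LEDs off

    final double pwmValue;

    BlinkinLedMode(double pwmValue) {
      this.pwmValue = pwmValue;
    }
  }

  /** Robot states in priority order: constants declared first win. Mapping is illustrative. */
  enum LedState {
    HP_THROW(BlinkinLedMode.SOLID_BLUE, true),
    ALIGNED(BlinkinLedMode.SOLID_GREEN, false), // hidden during auto
    TWO_CARGO(BlinkinLedMode.SOLID_RED, true),
    INTAKING(BlinkinLedMode.SOLID_WHITE, true);

    final BlinkinLedMode mode;
    final boolean showInAuto;

    LedState(BlinkinLedMode mode, boolean showInAuto) {
      this.mode = mode;
      this.showInAuto = showInAuto;
    }
  }

  private final Map<LedState, Boolean> active = new EnumMap<>(LedState.class);

  /** Each command or subsystem writes only its own state; no subsystem requirement needed. */
  void set(LedState state, boolean isActive) {
    active.put(state, isActive);
  }

  /** Returns the highest-priority active pattern, or off if nothing applies. */
  BlinkinLedMode select(boolean autonomous) {
    for (LedState state : LedState.values()) { // values() follows declaration order
      boolean isActive = active.getOrDefault(state, false);
      if (isActive && (!autonomous || state.showInAuto)) {
        return state.mode;
      }
    }
    return BlinkinLedMode.SOLID_BLACK;
  }

  public static void main(String[] args) {
    LedSelectorSketch leds = new LedSelectorSketch();
    leds.set(LedState.TWO_CARGO, true);
    leds.set(LedState.ALIGNED, true);
    // The chosen value would be written to the Blinkin's PWM output each loop.
    BlinkinLedMode mode = leds.select(false);
    System.out.println(mode + " -> " + mode.pwmValue);
  }
}
```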

During driver practice, we had some issues with the Blinkin failing to drive LEDs and not responding to user input, even after a power cycle. So far, these issues have been fixed by a factory reset (or just waiting long enough, apparently?). We’re still investigating this, but we’re hopeful that we can mitigate these issues.

Driver Practice & Shot Tuning

We’ve spent many hours tuning the fender and tarmac line shots, including several iterations of the shooter design (such as connecting the main flywheel to the top rollers mechanically). Others can add more details about those changes. Below are several videos from driver practice demonstrating those shots.

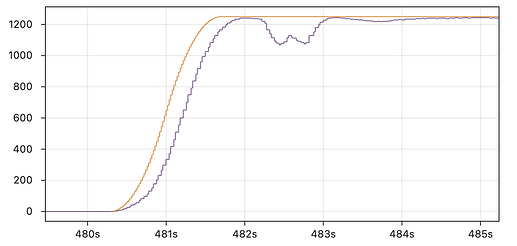

After some tuning of the angle (so that both shots go just over the rim of the hub), bounce-outs seem to be pretty rare. Making reliable shots also depended on getting the velocity PID control of the flywheel working well. Below is a graph of our flywheel speed during a set of shots in auto.

When ramping up, we found it useful to implement a trapezoidal profile to reduce overshoot (applied to the velocity setpoint, this enforces an acceleration and jerk limit). The flywheel is tuned such that it dips to a similar speed for each shot, meaning the arcs are consistent.
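A minimal sketch of profiling the flywheel setpoint, treating the flywheel speed as the profile’s “position”: the profile’s velocity limit then caps the flywheel’s acceleration and its acceleration limit caps jerk. The limits, RPM units, and the simple online update are assumptions for illustration; WPILib’s `TrapezoidProfile` class could fill the same role with the same mapping of units. The profiled value would be handed to the velocity PID as its setpoint each loop.

```java
/**
 * Hypothetical flywheel setpoint ramp: a trapezoidal profile applied to velocity, so the
 * profile's "velocity" is flywheel acceleration and its "acceleration" is jerk.
 * Limits and units (RPM) are illustrative assumptions.
 */
final class FlywheelRampSketch {
  private final double maxAccel; // RPM per second
  private final double maxJerk; // RPM per second squared
  private double speed = 0.0; // current profiled setpoint, RPM
  private double accel = 0.0; // current setpoint rate of change, RPM/s

  FlywheelRampSketch(double maxAccel, double maxJerk) {
    this.maxAccel = maxAccel;
    this.maxJerk = maxJerk;
  }

  /** Advances the profiled setpoint one loop toward the goal speed and returns it. */
  double step(double goal, double dt) {
    double error = goal - speed;
    // Largest acceleration from which jerk-limited braking can still bring acceleration to
    // zero right at the goal (a^2 = 2 * jerk * remaining error), capped at maxAccel.
    double desiredAccel =
        Math.signum(error) * Math.min(maxAccel, Math.sqrt(2.0 * maxJerk * Math.abs(error)));
    double maxChange = maxJerk * dt;
    accel += Math.max(-maxChange, Math.min(maxChange, desiredAccel - accel));
    double next = speed + accel * dt;
    if ((goal - speed) * (goal - next) <= 0.0) {
      // Would reach or cross the goal this loop: land on it instead of overshooting.
      // This simple version lets the final loop exceed the jerk limit.
      speed = goal;
      accel = 0.0;
    } else {
      speed = next;
    }
    return speed;
  }

  double getSpeed() {
    return speed;
  }

  double getAccel() {
    return accel;
  }

  public static void main(String[] args) {
    FlywheelRampSketch ramp = new FlywheelRampSketch(6000.0, 30000.0);
    for (int i = 0; i < 50; i++) {
      ramp.step(3000.0, 0.02);
    }
    System.out.println("setpoint after 1 s: " + ramp.getSpeed() + " RPM");
  }
}
```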

Mid-Rung Climb

We’re planning to stick with a simple mid-rung climb for our week one competition, with higher levels to come later. Below is the first test of our climber, which is driven by a position PID controller to extend and retract quickly.
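A minimal position PID sketch for the climber, with the output clamped to ±1 as a percent output. The gains, units, and the crude plant in `simulate` (arm speed proportional to output) are hypothetical; on a real robot this role would typically be filled by WPILib’s `PIDController` or a motor controller’s onboard position loop. Clamping lets a large error drive the arm at full speed, with the loop only slowing it near the setpoint, which suits fast extend and retract moves.

```java
/**
 * Hypothetical position PID for a climber, with output clamped to [-1, 1] (percent output).
 * Gains, units, and the simulated plant are illustrative assumptions.
 */
final class ClimberPidSketch {
  private final double kP;
  private final double kI;
  private final double kD;
  private double integral = 0.0;
  private double previousError = Double.NaN; // NaN until the first call after a reset

  ClimberPidSketch(double kP, double kI, double kD) {
    this.kP = kP;
    this.kI = kI;
    this.kD = kD;
  }

  /** Clears accumulated state, e.g. when switching between extend and retract. */
  void reset() {
    integral = 0.0;
    previousError = Double.NaN;
  }

  /** Returns a motor output in [-1, 1] driving the measured position toward the setpoint. */
  double calculate(double setpoint, double measurement, double dt) {
    double error = setpoint - measurement;
    integral += error * dt;
    double derivative = Double.isNaN(previousError) ? 0.0 : (error - previousError) / dt;
    previousError = error;
    double output = kP * error + kI * integral + kD * derivative;
    return Math.max(-1.0, Math.min(1.0, output));
  }

  /** Crude plant: arm speed proportional to output, 1.0 m/s at full output. */
  static double simulate(ClimberPidSketch pid, double start, double setpoint, int loops, double dt) {
    double position = start;
    for (int i = 0; i < loops; i++) {
      position += pid.calculate(setpoint, position, dt) * 1.0 * dt;
    }
    return position;
  }

  public static void main(String[] args) {
    ClimberPidSketch pid = new ClimberPidSketch(10.0, 0.0, 0.0);
    double extended = simulate(pid, 0.0, 0.5, 100, 0.02); // extend to 0.5 m
    pid.reset();
    double retracted = simulate(pid, extended, 0.0, 100, 0.02); // retract to 0.0 m
    System.out.printf("extended=%.4f m, retracted=%.4f m%n", extended, retracted);
  }
}
```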

Five Cargo Auto

At last! After running the five cargo auto in simulation or with just an intake for weeks, it’s very satisfying to see the routine working in full. With our first shooter design, we were initially unsure whether we could pull off this auto. However, later iterations could shoot from far enough back from the hub to make it viable again.

The path has also changed a little bit throughout our testing. Rather than driving backwards before each shot, the first set doesn’t require moving anymore (instead, it angles itself as it intakes, and there’s a turn-in-place to maneuver towards the next cargo). That second shot still moves backwards to avoid a sharp turn while intaking the cargo. The video below shows the full path running in a simulator:

Ultimately, we decided not to use vision data during this routine. We found that the data could sometimes offset otherwise reliable odometry (especially when moving quickly while far from the target). The vision system is still essential during tele-op where precise odometry is harder to maintain.

Another interesting note: we realized that every moment spent at the terminal makes the HP’s job much easier, since the timing doesn’t need to be as precise. With this in mind, the routine is set up to always finish at exactly 14.9 seconds, with any spare time spent at the terminal. This was very useful as we worked on refining the rest of the path, and we feel that the current version provides a reasonable length of time for the HP to deposit the fifth cargo. We also set up the LEDs to indicate when the HP should throw the cargo (the LEDs weren’t working during this particular run of the five cargo auto, but you can see them in the next video).
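A sketch of the fixed-finish timing, assuming “finish” means the end of the last shot and that the durations come from the planned path segments. The names and example durations are hypothetical. The terminal wait simply absorbs whatever time is left, so shortening an earlier segment during tuning gives the HP more time rather than changing when the routine ends.

```java
/**
 * Hypothetical helper for ending the five cargo auto at a fixed time by absorbing spare
 * time at the terminal. Durations would come from the planned trajectories.
 */
final class AutoTimingSketch {
  /** The routine always finishes at this time, in seconds since the start of auto. */
  static final double AUTO_END_SECONDS = 14.9;

  /**
   * Seconds to wait at the terminal, given when the robot arrives there (seconds since auto
   * start) and how long the remaining path and final shot take. Never negative.
   */
  static double terminalWaitSeconds(double arrivalSeconds, double remainingSeconds) {
    return Math.max(0.0, AUTO_END_SECONDS - remainingSeconds - arrivalSeconds);
  }

  public static void main(String[] args) {
    // Made-up example: arrive at 9.0 s with 3.4 s of driving and shooting left afterward.
    double wait = terminalWaitSeconds(9.0, 3.4);
    System.out.printf("wait at terminal for %.2f s%n", wait);
  }
}
```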

The command for our five cargo auto is here for those interested.

…And Other Autos Too

Of course, we also tested the rest of our suite of auto routines from our “standard” four cargo down to the three, two, and one cargo autos. We want to be well prepared with fallbacks in case the more complex autos aren’t functioning reliably (or aren’t needed based on the match strategy).

This is a fun variant of our normal four cargo auto, which starts in the far tarmac and crosses the field to collect cargo from the terminal. This is meant to run alongside a three cargo auto on the closer tarmac, though the strategic use case is admittedly niche. (Also I have a sneaking suspicion that @Connor_H may have violated H507 in this example…)