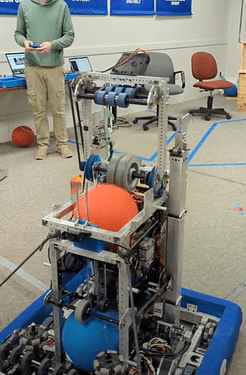

Here’s a quick update with some of our final changes before heading to Houston! During our last event, we noticed that this happened a few times:

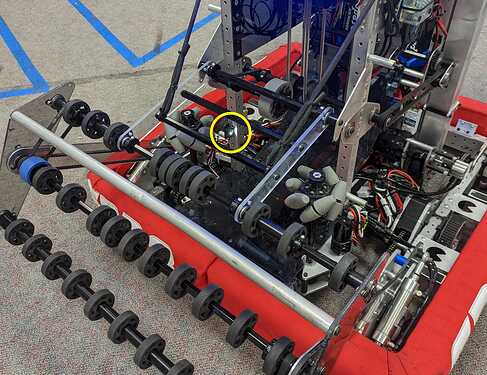

After extensive analysis, the strategy team has concluded that this sort of behavior is apparently “bad.” To prevent this from happening in the future, we added a color sensor along the ball path, connected via a Raspberry Pi Pico using this library.

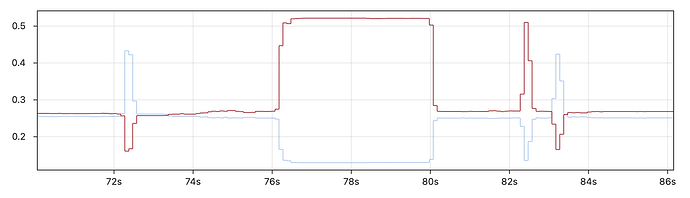

We found that the color and proximity measurements were quite reliable. Changes in lighting made very little difference to the detected color since the ball passes right in front of the sensor. To distinguish between cargo colors, we compare the red and blue channels (if one is greater than 150% of the other, a valid color is detected). Here’s some sample data from intaking a few balls:
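To make the threshold rule concrete, here is a minimal sketch of the red/blue comparison. The class and method names are hypothetical and not taken from the team's code or from the sensor library. It also assumes raw channel readings are passed in directly and that a separate proximity check has already confirmed a ball is present.

```java
/** Hypothetical helper illustrating the 150% red/blue comparison described in the post. */
final class CargoColorClassifier {
  enum CargoColor { RED, BLUE, NONE }

  // One channel must be greater than 150% of the other to count as a valid color.
  static final double RATIO_THRESHOLD = 1.5;

  /** Classifies a reading from raw red and blue channel values (units are sensor counts). */
  static CargoColor classify(double red, double blue) {
    if (red > blue * RATIO_THRESHOLD) {
      return CargoColor.RED;
    }
    if (blue > red * RATIO_THRESHOLD) {
      return CargoColor.BLUE;
    }
    // Channels are too close together, so no valid color is reported.
    return CargoColor.NONE;
  }
}
```

Comparing the channels as a ratio rather than against fixed values means the result does not depend on the absolute brightness of the reading, only on which channel dominates.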

Based on the detected color, the robot can automatically eject opponent cargo out the shooter (for the first ball) or out the intake (for the second ball). Here’s a demo of the system in action:

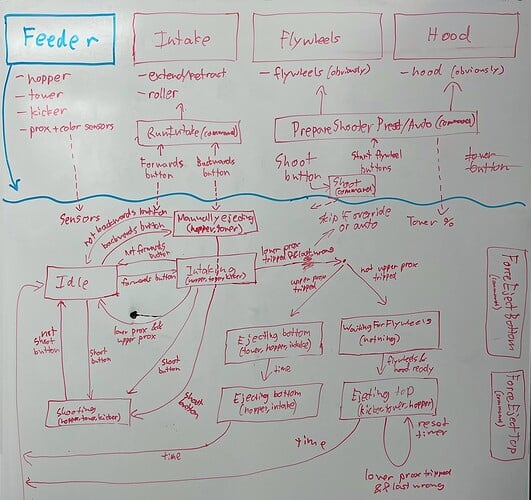

The controls for intaking, shooting, and ejecting are now significantly more complex, so we did a complete refactor of the feeder controls. Everything is built around a central feeder subsystem that controls the hopper, tower, and kicker wheels using a state machine (Feeder.java).

It takes the operator controls, proximity sensors, and color sensor as inputs to decide what the feeder should be doing (including temporarily taking over other subsystems like the flywheel as necessary). Here’s our whiteboard version of the state diagram — apologies for the messiness, but hopefully you get the idea.

This feature also allows the drive team to intake without needing to be as cautious around opponent cargo, which should hopefully decrease our average cycle time.
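For anyone curious what a feeder state machine along these lines might look like, here is a heavily simplified sketch. It is not the team's Feeder.java: the state names, the inputs, and the priority order (eject first, then shoot, then intake) are all assumptions made for illustration. The only behavior taken from the post is that opponent cargo leaves through the shooter when it is the first ball and through the intake when it is the second.

```java
/** Hypothetical, simplified feeder state machine; not the actual Feeder.java. */
final class FeederSketch {
  enum State { IDLE, INTAKING, SHOOTING, EJECT_VIA_SHOOTER, EJECT_VIA_INTAKE }

  private State state = State.IDLE;

  /**
   * Advances the state machine by one control loop cycle.
   *
   * @param intakeRequested operator is holding the intake control
   * @param shootRequested operator is holding the shoot control
   * @param opponentCargoSeen color sensor currently sees opponent-colored cargo
   * @param upperSlotFull a ball is already held in the first (upper) position
   */
  State update(boolean intakeRequested, boolean shootRequested,
      boolean opponentCargoSeen, boolean upperSlotFull) {
    switch (state) {
      case EJECT_VIA_SHOOTER:
      case EJECT_VIA_INTAKE:
        // Keep ejecting the same way until the sensor no longer sees opponent cargo.
        if (opponentCargoSeen) {
          return state;
        }
        break;
      default:
        if (opponentCargoSeen) {
          // Second ball leaves through the intake, first ball through the shooter.
          state = upperSlotFull ? State.EJECT_VIA_INTAKE : State.EJECT_VIA_SHOOTER;
          return state;
        }
    }
    // No ejection in progress: follow the operator controls.
    if (shootRequested) {
      state = State.SHOOTING;
    } else if (intakeRequested) {
      state = State.INTAKING;
    } else {
      state = State.IDLE;
    }
    return state;
  }
}
```

Latching the eject states until the sensor clears is one simple way to stop the operator controls from interrupting an ejection halfway through. A real implementation would likely use timers or the proximity sensors instead.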

“All your [cargo] are belong to us”

With a working color sensor, we can now revisit an autonomous routine that we first attempted before Greater Boston. This is our new three-cargo auto on the alternative side of the field:

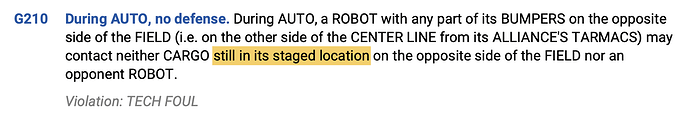

For those who aren’t familiar, this auto steals a cargo from the opposite side of the field based on the fine print in this rule:

By knocking the cargo off of its starting location, the robot can collect it without earning a tech foul. We found that the trickiest part of the auto was lining up the shot from the intake, since we need to hit the cargo on the opposite side directly in the center (otherwise it could roll unpredictably). Even resetting odometry from the hub target wasn’t quite accurate enough to do the job properly. Thus we can return to a quote from one of my earlier posts.

One day we will find a use for this [hood position].

Our solution was to raise the hood to this ridiculous position, spin the robot 180°, use the Limelight to track the cargo, and spin back 180° (relative to the aligned position). We just barely have enough time to make this maneuver, and throughout our testing it has worked much more reliably than any other alignment method.
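The heading arithmetic behind the "spin back 180°" step can be sketched as follows. This is only an illustration: the class and method names are hypothetical, and it assumes the Limelight faces the opposite direction from the intake (as the 180° spins suggest) and that headings are measured in degrees.

```java
/** Hypothetical heading math for pointing the intake where the Limelight was aimed. */
final class AlignmentMath {
  /** Wraps an angle in degrees into the range (-180, 180]. */
  static double wrapDegrees(double angle) {
    double wrapped = angle % 360.0;
    if (wrapped > 180.0) {
      wrapped -= 360.0;
    }
    if (wrapped <= -180.0) {
      wrapped += 360.0;
    }
    return wrapped;
  }

  /**
   * Returns the robot heading that points the intake at the cargo, given the heading
   * at which the Limelight was aligned with it.
   */
  static double intakeHeading(double limelightAlignedHeadingDegrees) {
    return wrapDegrees(limelightAlignedHeadingDegrees + 180.0);
  }
}
```

The important detail is that the return spin is measured from the heading recorded while the Limelight was aligned, not from the heading before the first spin, so any error in the first spin does not carry over.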

Once both cargo are collected, the robot uses the color sensor to detect the order of the red and blue balls. This allows it to shoot only the correct color while ejecting the opponent cargo at a low speed. The order tends to be pretty consistent, but the color sensor acts as a backup in case the balls roll differently (for example, if the intake shot is slightly misaligned).
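As a rough illustration of how the detected order could drive the shooting sequence, here is a minimal sketch. It is not taken from ThreeCargoAutoCrossMidline.java. The class, enum, and method names are hypothetical, and it assumes the colors are supplied in the order the cargo will leave the shooter.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical planner that maps a detected cargo order to shoot or eject actions. */
final class CargoSortPlan {
  enum CargoColor { RED, BLUE }
  enum Action { SHOOT, EJECT_LOW_SPEED }

  /**
   * Builds one action per cargo: shoot cargo matching our alliance color,
   * and eject opponent cargo at low speed.
   */
  static List<Action> plan(List<CargoColor> detectedOrder, CargoColor allianceColor) {
    List<Action> actions = new ArrayList<>();
    for (CargoColor color : detectedOrder) {
      actions.add(color == allianceColor ? Action.SHOOT : Action.EJECT_LOW_SPEED);
    }
    return actions;
  }
}
```

Because the plan is computed from the sensed order rather than hard-coded, the same routine covers both the usual order and the occasional case where the balls roll differently.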

The code for the auto is here: (ThreeCargoAutoCrossMidline.java). I’ll also note that the version of the auto in the video above is a little out of date. We’ve since improved the alignment to the cargo and stopped the blue alliance version from crashing into the wall quite as hard. We’re very excited to add this new routine to our existing suite of autos.

Houston, here we come!